Entropy calculator

8/30/2023

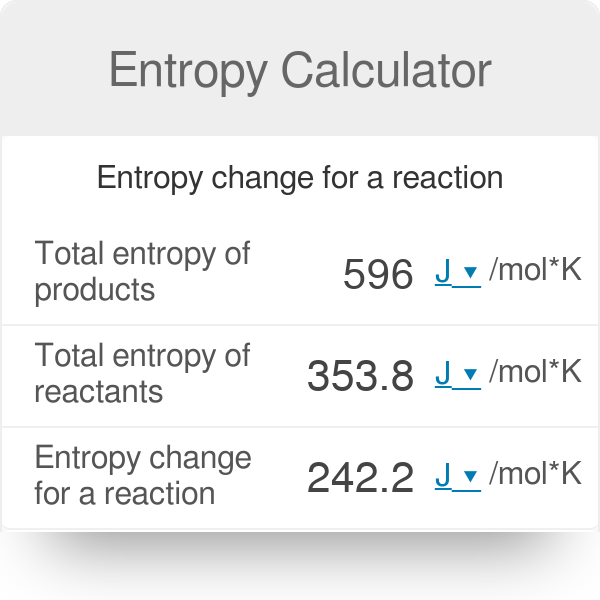

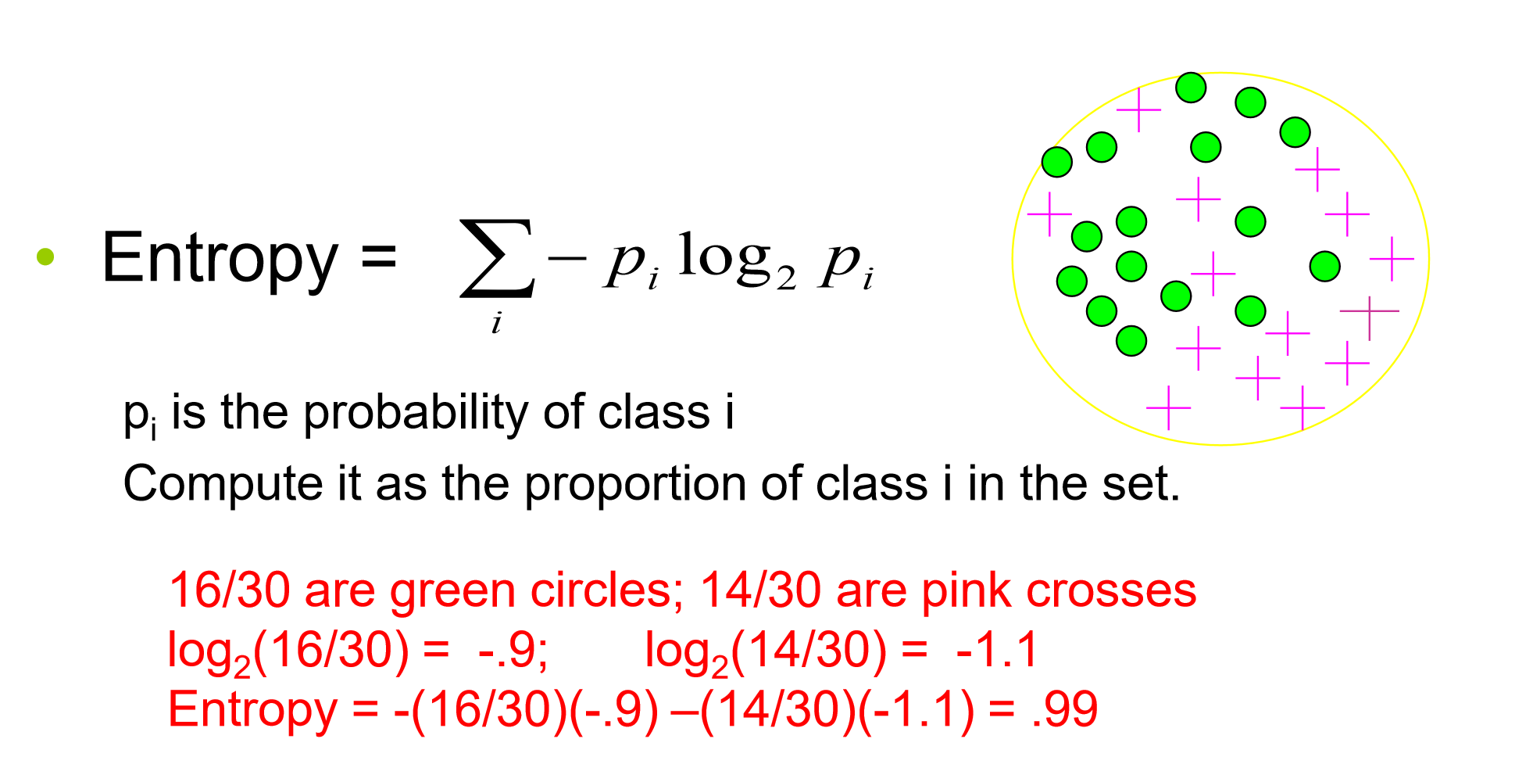

Let's look at the calculator's default data. By analyzing the attributes one by one, the algorithm should effectively answer the question: "Should we play tennis?" To take as few steps as possible, we need to choose the best decision attribute at each step: the one that gives us the maximum information. This attribute is used as the first split. The process then continues until no further split is needed (after the split, all the remaining samples are homogeneous; in other words, we can identify the class label) or until there are no more attributes to split on.

The generated decision tree first splits on "Outlook". If the "Outlook" is "Sunny", the tree checks the "Humidity" attribute: if the answer is "High", then it is "No" for "Play"; if the answer is "Normal", then it is "Yes" for "Play". If the "Outlook" is "Overcast", then it is "Yes" for "Play" immediately. If the "Outlook" is "Rainy", the tree needs to check the "Windy" attribute. Note that this decision tree does not need to check the "Temperature" feature at all!

You can use different metrics as the split criterion, for example entropy (via Information Gain or Gain Ratio), the Gini index, or classification error. This particular calculator uses Information Gain.

You might wonder why we need a decision tree if we could simply provide the decision for each combination of attributes. Of course we could, but even for this small example the total number of combinations is 3 × 2 × 2 × 3 = 36. On the other hand, we used only a subset of the combinations (14 examples) to train our algorithm (by building a decision tree), and now it can classify all the other combinations without our help. Of course, this approach has many implications regarding non-robustness, overfitting, bias, and so on. For more information, you may want to check the Decision tree learning article on Wikipedia.

In chemistry, an Entropy (S) calculator is an online chemical engineering tool that measures the degree of disorder and randomness of the moles involved in a chemical reaction, in both US customary and metric (SI) units.
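The attribute-selection process described above can be sketched in a few lines of Python. This is a minimal ID3-style sketch, not the calculator's own code: it assumes the default data is the classic 14-example "Play Tennis" (weather) data set popularized by Quinlan's ID3, and the helper names `entropy`, `information_gain`, and `build_tree` are hypothetical. It computes each attribute's information gain, splits on the best one, and recurses until the remaining samples are homogeneous or no attributes are left.

```python
from collections import Counter
from math import log2

# Assumption: the classic 14-example "Play Tennis" data set;
# the calculator's actual default data may differ.
ATTRIBUTES = ["Outlook", "Temperature", "Humidity", "Windy"]
ROWS = [
    # Outlook,  Temperature, Humidity, Windy, Play
    ("Sunny",    "Hot",  "High",   False, "No"),
    ("Sunny",    "Hot",  "High",   True,  "No"),
    ("Overcast", "Hot",  "High",   False, "Yes"),
    ("Rainy",    "Mild", "High",   False, "Yes"),
    ("Rainy",    "Cool", "Normal", False, "Yes"),
    ("Rainy",    "Cool", "Normal", True,  "No"),
    ("Overcast", "Cool", "Normal", True,  "Yes"),
    ("Sunny",    "Mild", "High",   False, "No"),
    ("Sunny",    "Cool", "Normal", False, "Yes"),
    ("Rainy",    "Mild", "Normal", False, "Yes"),
    ("Sunny",    "Mild", "Normal", True,  "Yes"),
    ("Overcast", "Mild", "High",   True,  "Yes"),
    ("Overcast", "Hot",  "Normal", False, "Yes"),
    ("Rainy",    "Mild", "High",   True,  "No"),
]


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    n = len(labels)
    return sum(-(c / n) * log2(c / n) for c in Counter(labels).values())


def information_gain(rows, attr_index):
    """Label entropy minus the weighted entropy of the subsets after the split."""
    labels = [r[-1] for r in rows]
    remainder = 0.0
    for value in set(r[attr_index] for r in rows):
        subset = [r[-1] for r in rows if r[attr_index] == value]
        remainder += len(subset) / len(rows) * entropy(subset)
    return entropy(labels) - remainder


def build_tree(rows, attr_indices):
    """Hypothetical ID3-style builder: split on the highest-gain attribute."""
    labels = [r[-1] for r in rows]
    if len(set(labels)) == 1:  # homogeneous: the class label is known
        return labels[0]
    if not attr_indices:       # nothing left to split on: use the majority label
        return Counter(labels).most_common(1)[0][0]
    best = max(attr_indices, key=lambda i: information_gain(rows, i))
    rest = [i for i in attr_indices if i != best]
    return {
        ATTRIBUTES[best]: {
            value: build_tree([r for r in rows if r[best] == value], rest)
            for value in sorted(set(r[best] for r in rows), key=str)
        }
    }


for i, name in enumerate(ATTRIBUTES):
    print(f"{name}: {information_gain(ROWS, i):.3f} bits")
print(build_tree(ROWS, list(range(len(ATTRIBUTES)))))
```

With this data, "Outlook" has the largest gain (about 0.247 bits), so it becomes the root, and the resulting tree never tests "Temperature". Swapping `entropy` for Gini impurity (1 minus the sum of squared class proportions) would turn this into a Gini-based criterion instead.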

A decision tree is a flowchart-like structure in which each internal node represents a "test" on an attribute (e.g., whether a coin flip comes up heads or tails), each branch represents the outcome of the test, and each leaf node represents a class label (the decision taken after computing all attributes). The paths from root to leaf represent classification rules.

Entropy shows up in other fields too. Programmers deal with a particular interpretation of entropy called programming complexity: learn more at our cyclomatic complexity calculator. You may also come across the phrase "password entropy". It's a measurement of how random a password is. It takes into account the number of characters in your password and the pool of unique characters you can choose from (e.g., 26 lowercase characters, 36 alphanumeric characters). The higher the entropy of your password, the harder it is to crack.

Ecologists use entropy as a diversity measure. From an ecological point of view, it is best if the terrain is species-differentiated.

In physics and chemistry, the entropy symbol is a capital S. It's said to have been chosen by Clausius in honor of Sadi Carnot (the father of thermodynamics). In information theory, the entropy symbol is usually the capital Greek letter eta, H. Our Shannon Entropy calculator is a free tool that helps you calculate the Shannon entropy of a given sequence of data, which measures the amount of uncertainty (information) in that data.
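As a rough illustration of the uses of entropy mentioned above, here is a minimal Python sketch. The function names are hypothetical. `shannon_entropy` computes H = -Σ p_i log2(p_i) over symbol frequencies; `password_entropy` assumes the common estimate E = L × log2(R) for a password of L characters drawn uniformly at random from a pool of R characters; and `shannon_diversity` assumes the natural-log Shannon index H' that ecologists typically use.

```python
from collections import Counter
from math import log, log2


def shannon_entropy(sequence):
    """Shannon entropy in bits per symbol: H = -sum(p_i * log2(p_i))."""
    n = len(sequence)
    return sum(-(c / n) * log2(c / n) for c in Counter(sequence).values())


def password_entropy(length, pool_size):
    """Assumed estimate for a uniformly random password: length * log2(pool_size) bits."""
    return length * log2(pool_size)


def shannon_diversity(species_counts):
    """Shannon diversity index H' = -sum(p_i * ln(p_i)) over species abundances."""
    total = sum(species_counts)
    return sum(-(c / total) * log(c / total) for c in species_counts if c > 0)


print(shannon_entropy("HTHTHHTT"))           # fair coin flips: 1.0 bit per symbol
print(round(password_entropy(8, 26), 1))     # 8 lowercase letters: 37.6 bits
print(round(password_entropy(8, 36), 1))     # 8 lowercase letters or digits: 41.4 bits
print(round(shannon_diversity([10, 10, 10, 10]), 3))  # four equally common species
```

Under the uniform-random assumption, both a larger character pool and a longer password raise the estimate; passwords chosen by people are usually far less random, so the real entropy is lower than this formula suggests.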

Now you know how to calculate Shannon entropy on your own! Keep reading to find out some facts about entropy. The term "entropy" was first introduced by Rudolf Clausius in 1865. It comes from the Greek "en-" (inside) and "trope" (transformation). Before that, it was known as "equivalence-value".
